Brewing With Kubernetes

☕ :kubernetes: March 4th, 2018

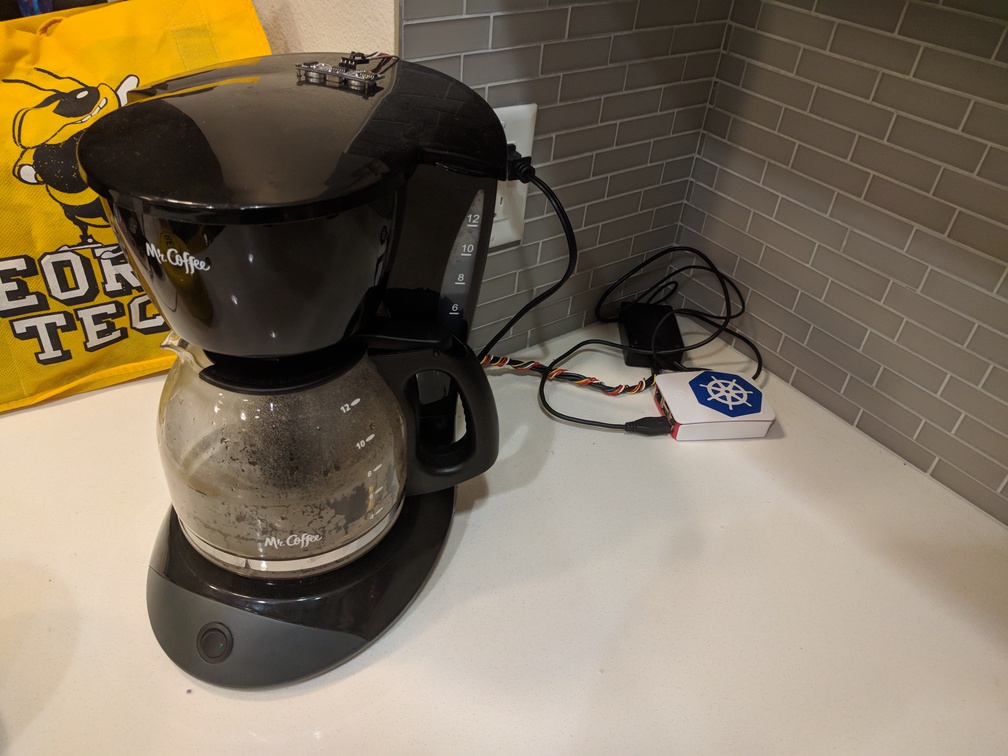

My coffee pot is now a node in my home Kubernetes cluster, and it’s awesome.

More specifically, the Raspberry Pi wired to my CoffeePot controller now runs on Kubernetes, thanks to kubeadm, in a cluster with the node running my site.

I set up a public live status page displaying all of the sensor data as well as the last update time, with control restricted to users on my local network. I’ve done this simply by proxying the status endpoint and not the rest of the coffeebot service. I’m also busily adding back support for scheduling brewing as well as alarms via the Google Calendar API, listening to a dedicated coffee calendar on my personal account.

✎ Update • September 2018: I’ve migrated my site to static hosting for unrelated reasons and taken the status page down for the moment.

Background

This might look a little ridiculous, but deploying (and debugging!) software updates to my coffeepot with kubectl has been fantastic.

If you know me, then you know that I’m not a morning person and that I tend to sleep in more on the weekends, so a simple same-time-every-day, alarm-clock-type coffee maker simply wasn’t cutting it. When I needed to build a “smart device” for a college course (CS 3651 Prototyping Intelligence Appliances), I created the original incarnation of this based around an Mbed and an old Android phone I had on hand. The phone has since died, so I finally decided to fix this up and upgrade to a full Linux box, and here we are.

The original Mr. Coffee Bot

Getting the Raspberry Pi Into my Cluster

Installing Kubernetes / kubeadm was straightforward enough; I just followed the official instructions. However, I hit a few small roadblocks related to multi-architecture support:

1. I was originally using Calico for my overlay network, which only supports amd64, not arm.

   I opted to solve this by re-creating my cluster, this time using Weave Net, which has out-of-the-box support for amd64, arm, arm64 and ppc64le.

2. kube-proxy was deployed with a DaemonSet pointing to an amd64-specific image, and couldn’t run on the Raspberry Pi.

   I solved this by editing the existing DaemonSet to have a nodeSelector for beta.kubernetes.io/arch: amd64 and creating a copy with arm substituted for amd64 throughout the DaemonSet, based on this gist.
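As a rough illustration of that split, here is a small Python sketch that takes a DaemonSet manifest as a dict and returns an amd64-pinned version plus an arm copy. The helper name `split_by_arch`, the trimmed manifest, and the image name are all assumptions made up for this example; it is not the content of the gist, and a blind string substitution like this should be reviewed before applying the result to a real cluster.

```python
import copy
import json

ARCH_LABEL = "beta.kubernetes.io/arch"  # node label named in the post


def split_by_arch(daemonset, base_arch="amd64", other_arch="arm"):
    """Return (pinned_original, copy_for_other_arch) without mutating the input."""
    pinned = copy.deepcopy(daemonset)
    pod_spec = pinned["spec"]["template"]["spec"]
    # Pin the existing DaemonSet to nodes of the base architecture.
    pod_spec.setdefault("nodeSelector", {})[ARCH_LABEL] = base_arch

    # Substitute the arch string throughout (image name, nodeSelector value).
    other = json.loads(json.dumps(pinned).replace(base_arch, other_arch))
    # The copy needs its own name so both DaemonSets can coexist.
    other["metadata"]["name"] = pinned["metadata"]["name"] + "-" + other_arch
    return pinned, other


# Hypothetical, heavily trimmed manifest; a real kube-proxy DaemonSet has many more fields.
example = {
    "metadata": {"name": "kube-proxy", "namespace": "kube-system"},
    "spec": {"template": {"spec": {
        "containers": [
            {"name": "kube-proxy", "image": "example.invalid/kube-proxy-amd64:v0"},
        ],
    }}},
}

amd64_ds, arm_ds = split_by_arch(example)
print(json.dumps(arm_ds, indent=2))
```

The two resulting dicts could then be dumped to files and applied with kubectl apply -f, the same way as any other manifest.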

These two rough spots are definitely not ideal, but also weren’t particularly difficult to work around. Hopefully we’ll fix #2 in particular by publishing all of the core components with multi-architecture images.

✎ Update • August 2021: The kube-proxy image has since been fixed upstream; these images are properly multi-arch, and kubeadm references the manifest list instead of architecture-specific images. This workaround is no longer required.

Leveraging Kubernetes

I use Kubernetes to:

- check the logs from anywhere with kubectl logs ...

- update by pushing a docker image and kubectl apply -f coffeebot-deployment.yaml

- connect the software’s API to my web server by creating a Service I can “magically” access at http://coffeebot-service.default from any node in the cluster

- automatically restart the service when it fails for any reason

- count restarts (useful for verifying that the service hasn’t been failing)

- expose a separate local-users-only control plane by exposing a different container port with a nodePort bound to 80 (on a different service not publicly exposed or proxied)

- conveniently and securely access the service(s) from anywhere with kubectl proxy
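For the restart counting in particular, Kubernetes reports a restartCount for each container in a pod’s status. A minimal sketch, assuming you already have the parsed JSON from kubectl get pods -o json; the function name and the sample pod names below are made up for illustration:

```python
def total_restarts(pod_list):
    """Sum restartCount over all containers in a parsed `kubectl get pods -o json` result."""
    total = 0
    for pod in pod_list.get("items", []):
        for status in pod.get("status", {}).get("containerStatuses", []):
            total += status.get("restartCount", 0)
    return total


# Hypothetical sample shaped like the PodList JSON; names and counts are illustrative.
sample = {
    "items": [
        {"metadata": {"name": "coffeebot-abc"},
         "status": {"containerStatuses": [{"name": "coffeebot", "restartCount": 2}]}},
        {"metadata": {"name": "coffeebot-def"},
         "status": {}},  # e.g. a pod that has not reported container statuses yet
    ]
}

print(total_restarts(sample))
```

A total that stays at zero between checks is a cheap signal that the service has not been crashing.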

Safety ⚠️

It’s worth noting that I did take a number of safety precautions when connecting an electric heater to the internet, Kubernetes powered or otherwise:

- The public API is proxied by my existing webserver running on a different node and is read-only. I originally intended to implement RFC 2324 but this seemed… safer, and the only fun status code is actually for teapots anyhow.

- Web controls are only exposed on a completely different service / port that is only available on my private WiFi / LAN.

- The heater has an original-from-manufacturer thermal fuse, mounted to the hotplate and inline with the power, that will blow if temperature ratings are exceeded.

- The actual microcontroller controlling the heater power (via solid state relay) has a hardware watchdog timer that will reboot it after five seconds pass without processing a valid command from the client (over USB serial).

  Similarly, the heater is explicitly disabled on boot, and again every time one second passes without receiving an enable command from the client.

- The original power switch is still part of the circuit, which can be shut off manually and has a visible light when power is flowing.
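To make the two timeouts concrete, here is a toy Python model of the behavior described in the list above. It is only a sketch: the real logic lives in microcontroller firmware with a hardware watchdog, and the class name, method names, and the idea that non-enable commands still feed the watchdog are assumptions for illustration.

```python
class HeaterSafetyModel:
    """Toy model of the enable timeout and watchdog timeout (not the real firmware)."""

    ENABLE_TIMEOUT_S = 1.0    # heater switches off without a fresh enable command
    WATCHDOG_TIMEOUT_S = 5.0  # controller reboots without any valid command

    def __init__(self, now=0.0):
        self.reboots = 0
        self._boot(now)

    def _boot(self, now):
        self.heater_on = False          # heater is explicitly disabled on boot
        self.last_valid_command = now
        self.last_enable = None

    def tick(self, now):
        """Apply both timeouts as of time `now` (in seconds)."""
        if now - self.last_valid_command >= self.WATCHDOG_TIMEOUT_S:
            self.reboots += 1
            self._boot(now)
        elif self.last_enable is None or now - self.last_enable >= self.ENABLE_TIMEOUT_S:
            self.heater_on = False

    def on_command(self, now, enable):
        """Record a valid command; assumed: any valid command feeds the watchdog."""
        self.tick(now)
        self.last_valid_command = now
        if enable:
            self.last_enable = now
            self.heater_on = True


model = HeaterSafetyModel()
model.on_command(0.0, enable=True)
model.tick(0.5)   # within one second of the enable command: heater stays on
model.tick(1.5)   # no fresh enable command: heater is switched off
model.tick(6.0)   # no valid command for five seconds: simulated reboot
print(model.heater_on, model.reboots)
```

The point of the design is that the client has to keep actively asking for heat; if the Pi, the USB link, or the pod goes away, the heater falls back to off on its own.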

Conclusion

Using Kubernetes standardizes deploying software, managing network services, and monitoring applications. It turns out all of these things are very handy for over-re-engineering your $18 coffee maker.

And yet: Is this overkill? Absolutely. 🙃

I hope this will be useful and / or interesting to someone. I’m off to brew some more coffee ☕️